(Written in advance, but published on May 2 when the relevant article is finally available.)

I’ve just finished writing a three-part series on building a containerized ASP.NET Core API that uses EF Core for its data persistence. All of this was done in VS2017, and I took advantage of the VS2017 Tools for Docker.

The article series will be in the April, May and June issues of MSDN Magazine.

Part 1: EF Core in a Docker Containerized App, Apr 2019

Part 2: EF Core in a Docker Containerized App, May 2019

But I didn’t have room to include the important task of deploying the app I’d written, although I worked hard to do it. Well, the deployment was pretty easy, but there were some new steps to learn in order to deal with storing a password for making a connection to my Azure SQL database. I will relay those steps in this blog post.

My API uses EF Core and targets an Azure SQL database. So whether I’m debugging locally with IIS or Kestrel, debugging locally inside of a Docker container or running the app from a server or the cloud, I can always access that database.

That means I have a connection string to deal with but I want to keep the password a secret.

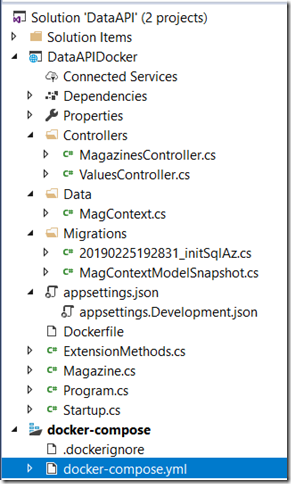

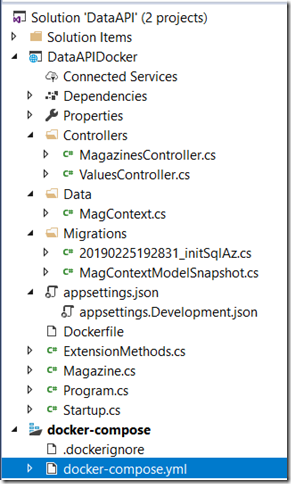

The structure of the solution is here. My ASP.NET Core API project is DataAPIDocker. And because I used the docker tools to add container orchestration, I have another folder in the solution for docker-compose.

I go into detail in part 2 of the article (the one in the May 2019 issue) but the bottom line is that I use a docker environment variable in my docker-compose.yml file.

    version: '3.4'

    services:
      dataapidocker:
        image: ${DOCKER_REGISTRY-}dataapidocker
        build:
          context: .
          dockerfile: DataAPIDocker/Dockerfile
        environment:
          - DB_PW

In the environment mapping, I have a sequence item where I’m defining the DB_PW key but I’m not including a value. Because there’s no value there, Docker will look in the host’s environment variables. Because I’m only debugging, I create a temporary environment variable on my system with the value of the password, and when I debug or run the app from VS2017, the password variable will be found. That environment variable gets passed into the running container, and my app has code to read it and include that password in the connection string.
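One way such a temporary variable could be set up and later removed is sketched below in PowerShell. This is an illustration under assumptions, not necessarily how the variable was created for the article: the value `eiluj` is only a placeholder, and the user-scope call assumes a Windows host where Visual Studio is started after the variable is set.

```powershell
# Placeholder value for illustration only; use the real password locally.
$password = 'eiluj'

# Process scope: visible to this PowerShell session and to programs started from it.
$env:DB_PW = $password

# User scope (Windows only; ignored on other platforms): visible to processes
# started afterwards, such as a freshly launched Visual Studio.
[Environment]::SetEnvironmentVariable('DB_PW', $password, 'User')

# ... debug or run the app here ...

# Remove the variable again so the password does not linger on the machine.
Remove-Item -Path Env:\DB_PW -ErrorAction SilentlyContinue
[Environment]::SetEnvironmentVariable('DB_PW', $null, 'User')
```

Keeping the cleanup next to the setup makes it harder to forget that the password is sitting in the environment.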

So it’s all self-contained, nice and neat.

Publishing the Image to the Azure Container Registry

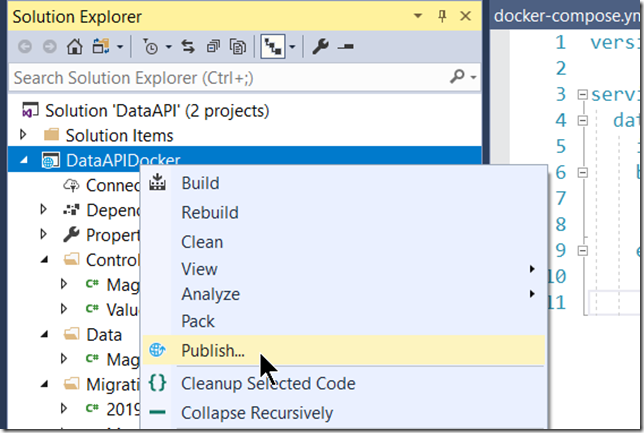

Once you’ve got the app working, it’s time to publish it. But we’re using Docker, so you’re not publishing the app itself, but the Docker image that can run the app for you. The Docker tools will help with this also.

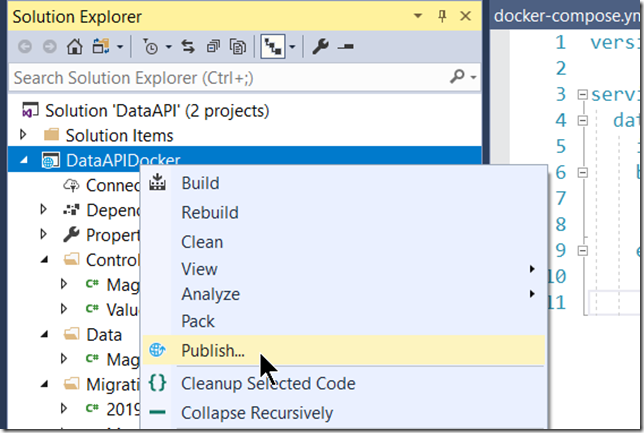

Right click the project and choose Publish.

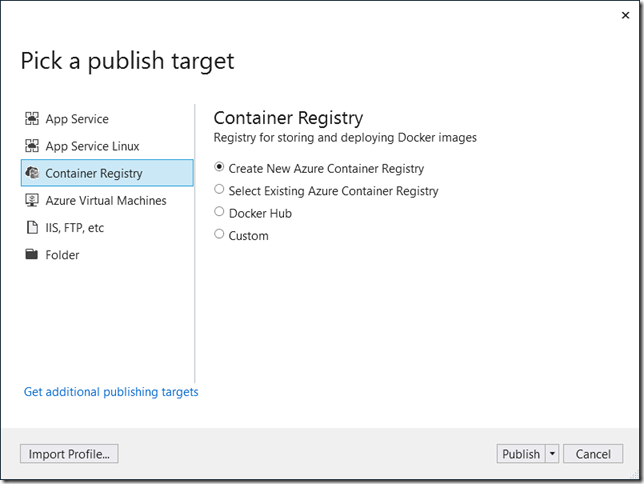

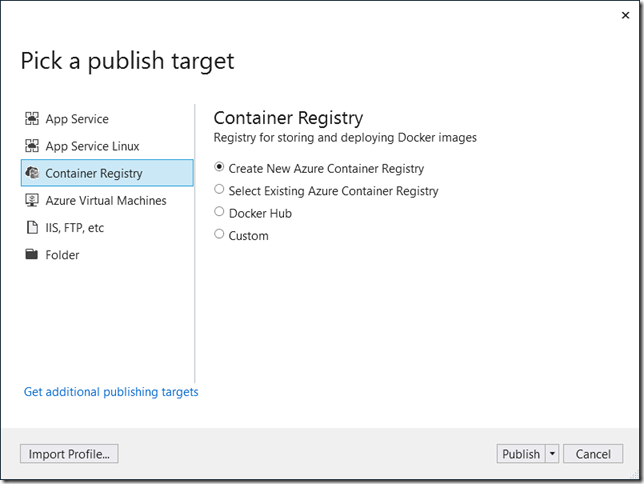

Then you will want to create a publish profile. Part of that profile is choosing where to publish the image. Here you’ve got options. I have a Visual Studio Subscription and can publish the image to an Azure Container Registry if I want, or to Docker Hub, or to some other registry.

My goal for this blog post is to get the image into the Azure Container Registry, so that’s my choice. You can have multiple container registries in your Azure account. And you can store any number of images in a single registry. Well, there may be technical or financial constraints, but the point is that you can have multiple images in a registry. I’m not here to advise on how to manage Azure finances, just how to do the task.

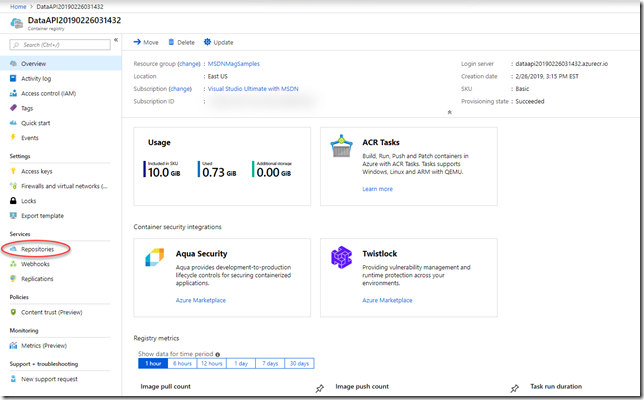

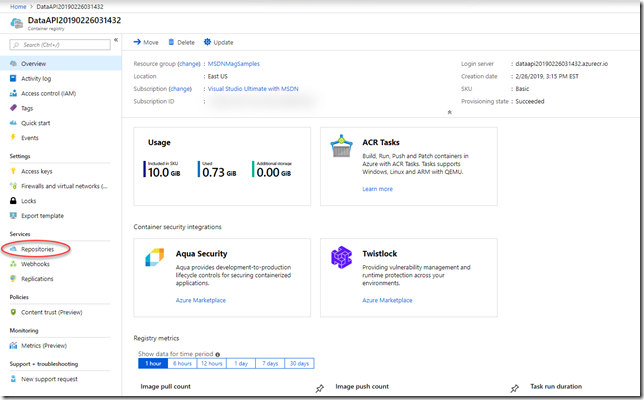

Here’s the overview page of a registry I let the publishing tool create for me. I’ve circled the link to see repositories which is where your images are accessible.

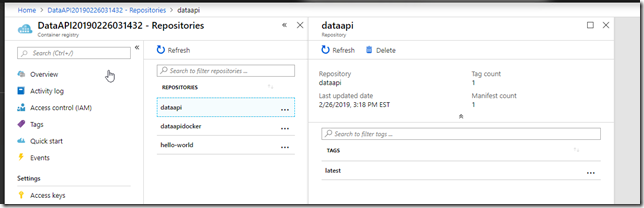

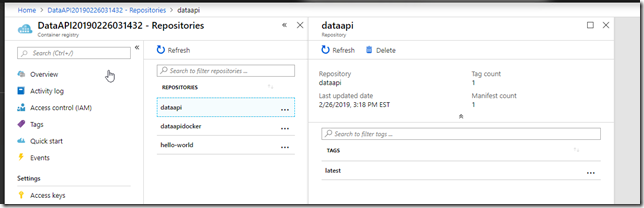

You may have different versions of a particular image so each “set” is a different repository. I have three repositories in mine where I’ve been experimenting.

The dataapi has only one image which the publishing tool automatically tagged “latest” for me. I can have other versions under different tags.

Back in Visual Studio, after walking through the publishing tool’s questions for creating a new repository, the final step is to go ahead and publish, which will build the image and push it up to the target repository. Keep in mind that you’ll want VS2017 to be set to run a RELEASE build, not a DEBUG build.

If it’s your first time pushing this image to the repository, the tooling will also push the ASP.NET Core SDK and runtime images that are listed in the app’s Dockerfile.

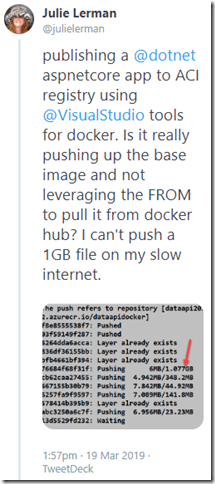

I was surprised to see this, wondering why Azure didn’t just grab them from Docker Hub and why I was uploading those big files directly. Naturally, I tweeted my confusion.

There’s more to the story but it is beyond the scope of my goals here.

A cool feature of this registry is that you can right click and run an image. Which is fine if you aren’t trying to orchestrate a number of images and that matches my case. This image does run independently.

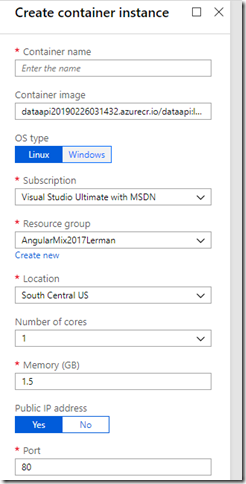

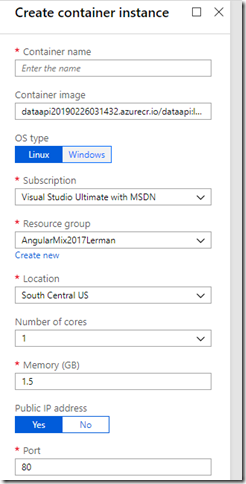

Right click on the image and choose Run instance. Azure will create a container and run it as an Azure Container Instance. Although first you need to define specs for the instance.

It’s kind of magical because you don’t have to create and manage a virtual machine to run the container on if it’s a simple application.

What About Environment Variables for the Container Instance?

The instance will run but the Magazines controller that needs to read from the Azure SQL database will fail because we haven’t provided the password which the container expects to be provided through the host’s environment variable. So for my image, right click and run wasn’t quite enough.

This is where I had to do a lot of reading, research and experimentation until I got the solution working. (Keep in mind that if I were running this on a virtual machine of my own devising, I could just pass the variable in when manually calling docker run.)
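For comparison, passing the variable by hand on a machine where Docker is installed might look like the sketch below. The image tag and the port mapping are assumptions for illustration. Using `-e DB_PW` without a value asks Docker to copy the value from the calling environment, which mirrors the value-less DB_PW entry in docker-compose.yml.

```powershell
# Assumes Docker is installed and DB_PW is already set in this session.
# The image tag and the 8080:80 port mapping are illustrative assumptions.
docker run --rm -p 8080:80 -e DB_PW dataapidocker:latest
```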

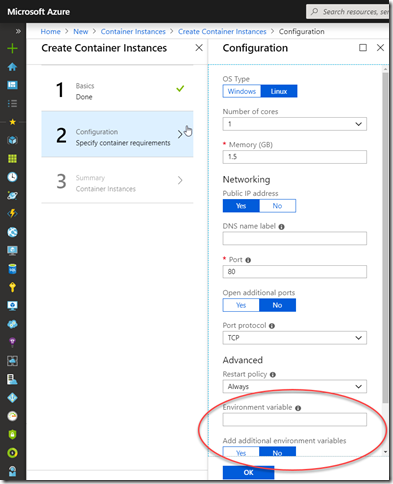

There are two ways to provide an environment variable to a container instance.

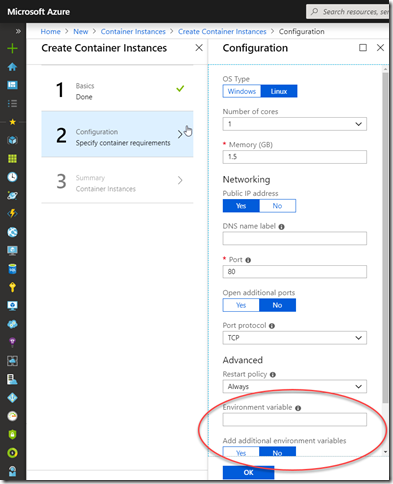

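One way is through the portal: rather than right-clicking the image, you start by creating a new container instance in Azure and pointing it to the image. This path lets you assign up to 3 environment variables in the configuration. The other way, which the rest of this post follows, is to create the container instance from PowerShell and pass the variables through the EnvironmentVariable parameter.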

Using an On-The-Fly Variable to Pass into the Container

I’m going to do a first pass creating a variable on the fly to pass to EnvironmentVariable. Then I’ll show you how to use the Azure Key Vault.

EnvironmentVariable expects a hashtable.

Create a new variable (I’ll call it envVars in homage to the resource where I learned this) and assign a single key value pair:

    $envVars = @{'DB_PW'='eiluj'}

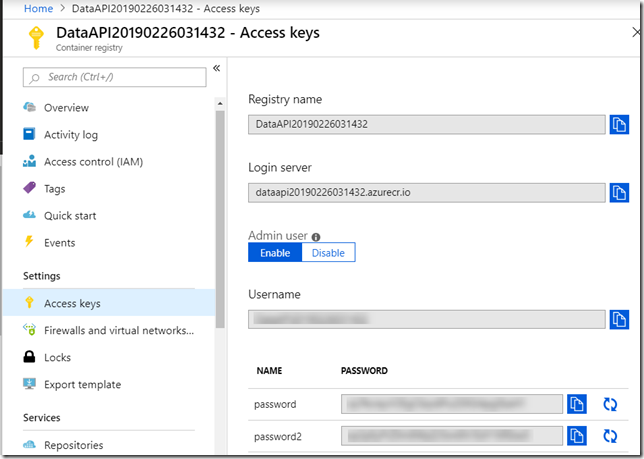

The other tricky part is providing the credentials to access the image in the registry. We don’t have to do that when using the portal to create the container because we’ve already provided them. But now I need to provide them.

You’ll need the user name and password from the registry.

Then you can use PowerShell to create a secure string from the password and then use that secure string along with the username to create a PowerShell credential object.
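Those two steps can be sketched as follows. The user name and password here are hypothetical placeholders standing in for the values copied from the registry, and the variable names are chosen only for this illustration.

```powershell
# Hypothetical placeholders; substitute the registry's real user name and password.
$registryUser     = 'myregistry'
$registryPassword = 'registry-password-here'

# Convert the plain-text password into a SecureString.
# -AsPlainText requires -Force to acknowledge that the input is plain text.
$securePassword = ConvertTo-SecureString -String $registryPassword -AsPlainText -Force

# Combine the user name and the secure password into a credential object.
$cred = New-Object System.Management.Automation.PSCredential ($registryUser, $securePassword)
```

The resulting `$cred` object is what gets handed to cmdlets that accept a credential, so the password is not passed around as a plain string after this point.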

TIP: If you have multiple subscriptions, be sure you’re pointing to the correct one where the target resource group is.
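A rough sketch of selecting the subscription and then creating the container instance with the variable and the credential follows. It assumes the Az PowerShell module, and specifically the hashtable-style `-EnvironmentVariable` and `-RegistryCredential` parameters that `New-AzContainerGroup` offered in the 2019-era Az.ContainerInstance module; later module versions changed these parameters, so check the version you have. Every name below (subscription, resource group, container name, registry) is a hypothetical placeholder.

```powershell
# List the subscriptions available to the signed-in account.
Get-AzSubscription

# Point the session at the subscription that holds the target resource group.
Set-AzContext -Subscription 'My Subscription Name'   # hypothetical name

# Environment variables for the container, as a hashtable (placeholder password).
$envVars = @{ 'DB_PW' = 'eiluj' }

# Registry credential; prompting here keeps the sketch self-contained and
# avoids hard-coding the registry password.
$cred = Get-Credential -Message 'Registry user name and password'

# Parameters collected in a hashtable and splatted into the cmdlet.
# Assumption: parameter names as in the 2019-era Az.ContainerInstance module.
$containerParams = @{
    ResourceGroupName   = 'MyResourceGroup'                       # hypothetical
    Name                = 'dataapi'                               # hypothetical
    Image               = 'myregistry.azurecr.io/dataapi:latest'  # hypothetical registry
    IpAddressType       = 'Public'                                # so the API is reachable
    RegistryCredential  = $cred
    EnvironmentVariable = $envVars
}

# Create the container instance from the image in the registry.
New-AzContainerGroup @containerParams
```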

TIP: A cool thing you can do in Cloud Shell is type DIR to list your subscriptions and then use CD to get into the correct one! Check out the PowerShell Cloud Shell quick start for details.

TIP: If, like me, you mess around with the database to experience cause & effect, remember that in my sample code, the database gets migrated on app startup. With the app in a container, that means when the container instance is run. So if you run the container and then delete the database, you won’t see the db again until the container is spun up again. Stopping & restarting has the same effect. Of course this is just for testing things out, not production! Once again, this is something that had me stuck for over an hour until I had my aha moment.

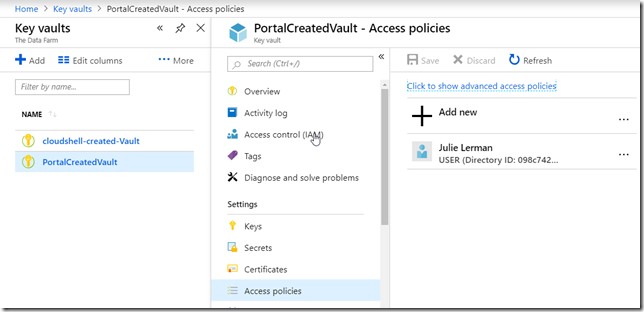

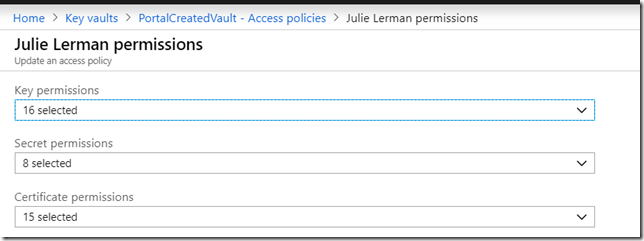

Creating a KeyVault and Adding My Secret Password